You need more candidates, or you need better candidates. These are separate problems. Yet most vendor messaging treats "AI recruitment" as a single capability, which leaves talent acquisition leaders struggling to evaluate tools against their actual bottleneck. This guide is published by Mokka, an AI candidate screening platform. We include ourselves alongside competitors and aim to be accurate about both our strengths and limitations.

The conflation makes sense historically. Five years ago, most recruiting tools did one thing: parse resumes slightly faster than a human could. But the market has split. Sourcing tools now crawl billions of profiles across the internet to find people who match your criteria. Screening tools evaluate whether the candidates you already have can actually do the job. The AI behind each is fundamentally different. The ROI calculation is fundamentally different. The risks are fundamentally different.

If you are evaluating AI tools for your hiring process, understanding this distinction is the difference between solving your problem and buying expensive software that addresses the wrong bottleneck.

How AI Sourcing Works

AI sourcing solves a pipeline problem. You have open roles and not enough qualified applicants. The technology works by aggregating candidate data from across the web — LinkedIn profiles, GitHub repositories, personal portfolios, conference speaker lists, patent databases — and matching those profiles against your job requirements.

The main approaches break down into three categories.

Aggregated search engines pull candidate data from public sources into a searchable database. You define parameters like job title, location, skills, and years of experience. The AI returns profiles that match. Tools like HireEZ and SeekOut operate primarily in this space. Their value is breadth: HireEZ and SeekOut each claim access to more than 800 million public profiles.

Outreach automation layers engagement on top of search. Once the AI identifies matching profiles, it generates personalized outreach sequences (emails, LinkedIn messages, InMail) to start conversations. Gem and LeverTRM combine sourcing with these CRM capabilities. The AI optimizes send times, subject lines, and follow-up cadences based on response data.

Talent pooling and rediscovery focuses on candidates already in your system. The AI scans your existing ATS database to find past applicants or silver medalists who match new open roles. This is often the highest-ROI sourcing approach because you have already paid to acquire these candidates.

The buying decision for sourcing tools hinges on data coverage in your specific geography and industry, integration depth with your existing ATS, and whether your bottleneck is truly candidate volume. If you are receiving 200 qualified applicants per role, a sourcing tool will not help. If you are receiving five, it might.

How AI Screening Works

AI screening solves an evaluation problem. You have candidates, possibly too many, and need to determine which ones can actually do the work before investing human hours in interviews.

The approaches here are more varied and carry different tradeoffs.

Keyword matching and resume scoring is the oldest form of AI screening. The system parses resumes for relevant keywords, job titles, and experience patterns, then ranks candidates based on match percentage. Most ATS platforms include basic versions of this. The limitation is well-documented: keyword matching rewards resume formatting skills over actual capability. A candidate who lists "project management" twelve times outranks one who led complex projects but used different language.
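
The limitation is easy to reproduce. The following is a minimal sketch of a naive keyword scorer, not any vendor's actual algorithm; the function name and both resume snippets are hypothetical and exist only to illustrate the ranking problem described above.

```python
import re


def keyword_match_score(resume_text: str, keywords: list[str]) -> int:
    """Count case-insensitive occurrences of each keyword phrase in a resume."""
    text = resume_text.lower()
    return sum(len(re.findall(re.escape(k.lower()), text)) for k in keywords)


# Hypothetical job keyword and resume snippets, for illustration only.
keywords = ["project management"]
resume_a = (
    "Project management lead. Project management certified. "
    "Project management tools."
)
resume_b = "Led a 14-person, three-vendor platform migration delivered on schedule."

score_a = keyword_match_score(resume_a, keywords)  # repeats the phrase
score_b = keyword_match_score(resume_b, keywords)  # describes the work instead

print(score_a, score_b)
```

Resume A outranks Resume B purely because it repeats the target phrase, even though Resume B describes the harder work. Production systems are usually more sophisticated than this, but the failure mode is the same whenever the score is driven by surface wording.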

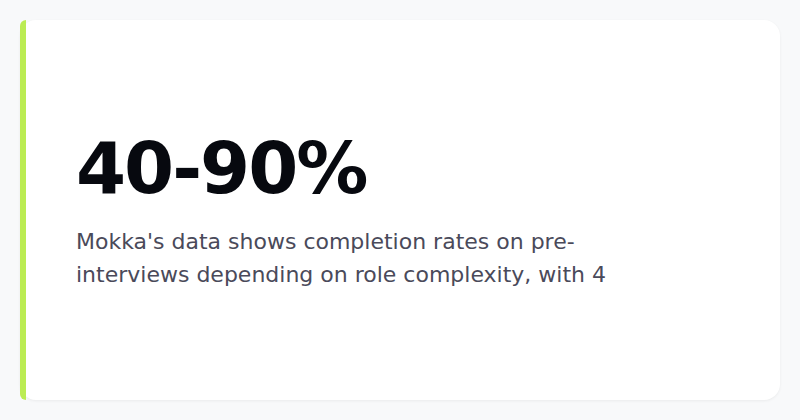

Structured pre-interviews use AI to conduct brief, standardized assessments before human interviews begin. Candidates respond to role-specific questions through text, voice, or video, and the AI evaluates responses against predefined criteria. Mokka operates in this category, alongside tools like Vervoe and TestGorilla. The goal is to move beyond resume claims to actual evidence of capability. Mokka's data shows 40-90% completion rates on pre-interviews depending on role complexity, with 4.7 out of 5 candidate satisfaction scores. The tradeoff is that these assessments add a step to the candidate experience, and poorly designed ones create friction that drops qualified candidates. Any tool in this category should demonstrate completion rates above 40%; anything below that suggests the assessment is too long, too confusing, or perceived as disrespectful of the candidate's time.

Skills assessments and work samples test specific competencies through coding challenges, writing exercises, case studies, or practical tasks. HackerRank, Codility, and similar platforms focus heavily on technical roles. These provide direct evidence of skill but work best for roles with clearly testable outputs. Assessing a product manager's strategic thinking through a timed multiple-choice test is less effective than assessing a JavaScript developer's coding ability through a practical exercise.

Predictive analytics uses historical hiring data to identify patterns that correlate with job performance. If your top performers over the past five years share certain characteristics (specific career trajectories, educational backgrounds, or skill combinations), the AI flags candidates with similar profiles. This approach carries the highest bias risk because it optimizes for your existing workforce composition, which may already reflect historical inequities.

Why the Distinction Matters for Your Tech Stack

Treating sourcing and screening as interchangeable leads to two common and expensive mistakes.

Buying sourcing when you need screening. Many companies already have more applicants than they can process. Their ATS is full of resumes that no one has time to review. Adding a sourcing tool to generate even more applications without a screening mechanism is like opening a wider faucet into a clogged drain. The 2025 SHRM Talent Trends analysis emphasizes that current talent challenges require understanding recruiting difficulties and skill-demand shifts as distinct problems requiring distinct strategies. Volume is not the same as quality.

Buying screening when you need sourcing. Conversely, if you have twenty applicants for a role that requires one hundred, implementing an AI screening tool gives you a beautifully ranked list of twenty people. You still have an eighty-person deficit. The screening is academically sound but operationally useless.

The ROI calculation differs as well. Organizations report $3.70 returned for every $1 invested in AI recruitment tools according to theaiuniversity.com, but that aggregate number obscures the breakdown. Sourcing ROI is relatively straightforward to measure: cost per qualified candidate acquired, time to build a pipeline of sufficient size. Screening ROI is more nuanced: time saved per hire, quality-of-hire metrics at 90 days and six months, reduction in early turnover. Jason Leong from LinkedIn notes that speed is the most commonly cited benefit when organizations implement both sourcing and screening AI together, with dramatically faster hiring processes. But speed without accuracy is just faster bad hiring.

Key Evaluation Criteria

When evaluating tools in either category, specific benchmarks separate functional platforms from expensive shelfware.

For Sourcing Tools

Data freshness. Ask vendors how frequently their candidate data is updated. A profile aggregated eighteen months ago may be irrelevant if the candidate has changed roles, companies, or locations. Look for data refreshes measured in weeks, not quarters.

Geographic and industry coverage. A sourcing tool with strong coverage in US technology markets may be nearly useless for manufacturing roles in Southeast Asia. Request specific numbers for your target candidate profiles, not aggregate database size.

Integration depth. API-level sync with your ATS means sourced candidates flow directly into your existing workflows. CSV export means someone on your team will manually transfer data. The difference is automation versus data entry.

For Screening Tools

Completion rates above 40%. Anything below suggests the assessment creates unacceptable friction. Candidates abandon processes that feel excessive, particularly in competitive talent markets where they have options.

Validation methodology. Ask vendors how they validate that their assessments predict job performance. Tools that rely solely on vendor claims without third-party validation or client-specific benchmarking are guessing.

Bias auditing. AI screening carries compliance risks that sourcing does not. Under the EU AI Act and New York City's AEDT law, employers face specific obligations around automated employment decisions. Ask vendors for documentation of regular bias audits, disparate impact analysis, and accommodation procedures for candidates with disabilities.
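
To make "disparate impact analysis" concrete, here is a minimal sketch of an impact ratio calculation: each group's selection rate divided by the highest group's selection rate. The 0.8 cutoff reflects the commonly cited four-fifths rule of thumb; it is a screening heuristic, not a legal determination, and the group labels and counts below are hypothetical.

```python
def selection_rates(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """outcomes maps group -> (candidates advanced, candidates assessed)."""
    return {g: sel / total for g, (sel, total) in outcomes.items() if total > 0}


def impact_ratios(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Each group's selection rate relative to the highest group's rate."""
    rates = selection_rates(outcomes)
    top = max(rates.values(), default=0.0)
    if top == 0:
        return {g: 0.0 for g in rates}
    return {g: r / top for g, r in rates.items()}


# Hypothetical screening outcomes, for illustration only.
outcomes = {
    "group_a": (60, 200),  # 30% advanced
    "group_b": (30, 150),  # 20% advanced
}

ratios = impact_ratios(outcomes)
flagged = [g for g, r in ratios.items() if r < 0.8]  # four-fifths rule of thumb

print(ratios, flagged)
```

A vendor's audit documentation should show this kind of breakdown for the categories your jurisdiction requires, along with the sample sizes behind each rate. Small groups produce noisy ratios, so a flag is a prompt for closer review rather than a conclusion.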

Approaches Compared

Resume Screening (Keyword Matching)

Tools: Most major ATS platforms (Greenhouse, Lever, Workday) include basic versions. Standalone options include CVViZ and HireAbility.

Best for: High-volume roles where resumes contain standardized information and the primary need is triage.

Limitation: Rewards resume optimization over actual capability. AI resume screening achieves an 89-94% accuracy rate according to theaiuniversity.com, but that accuracy measures how well the system matches keywords to a job description, not how well it predicts job performance.

Evidence-Based Screening (Pre-Interviews)

Tools: Mokka, Vervoe, TestGorilla, Willo.

Best for: Roles where past experience listed on a resume is an unreliable predictor of actual performance: management positions, cross-functional roles, career changers, or any position where how someone thinks matters more than what they have done.

Limitation: Adds a step to the candidate experience. Mokka is an early-stage company founded in October 2023, so enterprise buyers should evaluate whether the platform's maturity matches their compliance and support requirements. Seat-based pricing can also become expensive for large teams compared to per-assessment or flat-rate models.

Skills Assessment

Tools: HackerRank, Codility, CodeSignal for technical roles; eSkill, Criteria for broader applications.

Best for: Roles with clearly testable, objective outputs. Software engineering is the obvious use case, but this also applies to data analysis, copywriting, graphic design, and accounting.

Limitation: Poor fit for roles requiring soft skills, strategic thinking, or complex stakeholder management. A timed coding test tells you whether someone can write a sorting algorithm under pressure; it does not tell you whether they can collaborate with a product team to scope a feature.

AI Sourcing (Aggregated Search)

Tools: HireEZ, SeekOut, LinkedIn Recruiter with AI features, Entelo (now part of Bullhorn).

Best for: Organizations with specific, hard-to-fill roles where the qualified candidate pool is small and passive. Niche technical roles, senior leadership positions, specialized medical or legal positions.

Limitation: Does not evaluate candidate quality or interest. Sourcing identifies people who look right on paper; it does not confirm they can do the job or want to work for your company.

Combined Sourcing and Screening

Tools: Some platforms are beginning to bridge both capabilities. Mokka offers sourcing access to over 850 million passive candidate profiles alongside its screening functionality. Eightfold AI and Beamery also operate across both stages.

Best for: Organizations that want a unified workflow from candidate identification through evaluation, particularly mid-size companies building their first AI recruiting stack.

Limitation: Combined tools often excel in one area and are adequate in the other. Evaluate each capability independently rather than assuming strength in one implies strength in both. For executive search specifically, specialized tools may outperform combined platforms.

What to Watch Out For

Hidden costs. Per-assessment pricing seems reasonable until you scale. A screening tool that charges $15 per assessment costs $15,000 per month if you assess 1,000 candidates a month. Sourcing tools that charge per contact reveal can become unpredictable. Ask for total cost modeling based on your actual hiring volume, not vendor-provided averages.
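
A rough cost model is simple enough to build yourself before a vendor call. The sketch below uses the $15-per-assessment figure from the example above; the seat count and seat price are hypothetical placeholders to replace with your own quotes.

```python
def per_assessment_cost(assessments_per_month: int, price_per_assessment: float) -> float:
    """Monthly cost under per-assessment pricing."""
    return assessments_per_month * price_per_assessment


def seat_based_cost(seats: int, price_per_seat: float) -> float:
    """Monthly cost under seat-based pricing."""
    return seats * price_per_seat


# $15 per assessment at 1,000 candidates a month, as in the example above.
monthly = per_assessment_cost(1_000, 15.0)
annual = monthly * 12

# Hypothetical seat-based quote: 10 recruiter seats at $400 per seat per month.
seat_monthly = seat_based_cost(10, 400.0)

# Monthly assessment volume at which the two pricing models cost the same.
break_even_volume = seat_monthly / 15.0

print(monthly, annual, seat_monthly, round(break_even_volume))
```

Under these assumed numbers, per-assessment pricing is cheaper below roughly 267 assessments a month and more expensive above it. The point is less the specific figure than running the comparison at your real volume, including seasonal peaks.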

Vendor lock-in. Some platforms create closed ecosystems where your sourcing data, screening results, and candidate communications live inside their proprietary system. If you leave, you lose the historical data. Clarify data portability before signing, and be specific about what happens to your candidate database if the contract ends.

Compliance risk asymmetry. AI sourcing carries relatively low compliance risk: you are identifying potential candidates, not making employment decisions. AI screening carries significantly higher risk because it directly influences who gets interviewed and hired. Under the EU AI Act, AI systems used for recruitment and personnel selection are classified as high-risk, requiring specific transparency and oversight measures. New York City's AEDT law requires bias audits for automated employment decision tools. The legal landscape is evolving quickly, and tools that comply today may not comply with regulations enacted next year. Ask vendors about their compliance roadmap, not just their current status.

The bias problem in screening specifically. The AI University calls HR "one of the most contentious" sectors for AI adoption, and for good reason. Screening AI that learns from historical hiring data will replicate historical hiring patterns, including any biases present in those patterns. A tool that perfectly predicts who your company has historically hired will perfectly predict more people who look like your current workforce. This is technically accurate and ethically problematic. Peter Leffkowitz from the Elite Recruiter Podcast raises a related concern: rapid AI-driven placements can lead to superficial matches rather than quality hires. Speed without critical evaluation of what the AI is optimizing for creates risk.

Integration gaps. Vendors often claim integration with major ATS platforms. The depth of those integrations varies enormously. A native integration with two-way sync is different from a Zapier connector that moves data in one direction. Before evaluating features, confirm that the tool connects meaningfully with your specific ATS version and configuration. Ask to speak with a reference customer using the same ATS you use.

The Risk of Speed Without Depth

National Search Group frames AI tools as automating repetitive tasks (resume screening, interview scheduling, sourcing), which frees recruiters to focus on strategy and relationships. This framing is accurate but incomplete. Automation of repetitive tasks is valuable, but the deeper question is what the AI enables that was not possible before.

AI adoption in recruiting reduces time-to-hire by 50% according to theaiuniversity.com. That number is real and significant. But reducing time-to-hire by half only helps if the candidates you hire faster are good hires. If your screening process was previously identifying the wrong candidates, doing it faster does not improve outcomes.

The contrarian perspective, one that most vendor content avoids, is that speed is the wrong metric to optimize first. Accuracy and fairness should come before velocity. Once you have a screening process that reliably identifies strong candidates without systematic bias, accelerating that process is genuinely valuable. Accelerating a flawed process just produces flawed results faster.

This is why understanding the sourcing-screening distinction matters practically. Sourcing is a velocity problem: how quickly can you identify enough potential candidates? Screening is an accuracy problem: how reliably can you evaluate which candidates will succeed? Optimizing velocity when your constraint is accuracy wastes money. Optimizing accuracy when your constraint is velocity wastes time.

Conclusion

If you are drowning in unreviewed applications, your problem is screening. Look at evidence-based screening tools that reduce your evaluation time without sacrificing candidate quality. Focus on completion rates, validation methodology, and bias auditing.

If you cannot find enough candidates for open roles, your problem is sourcing. Look at aggregated search tools with strong coverage in your specific geography and industry. Focus on data freshness, outreach capabilities, and ATS integration depth.

If you have both problems (not enough candidates for some roles, too many unreviewed applications for others), you need both capabilities. Evaluate them separately. A tool that sources well but screens poorly gives you more candidates and no better way to evaluate them. A tool that screens well but sources poorly gives you better evaluation of an insufficient pipeline.
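
The three cases above can be sketched as a per-role check against your own ATS numbers. This is an illustrative heuristic only: the `RoleStats` fields, the thresholds, and the sample roles are all hypothetical, and a real assessment would use whatever pipeline targets and review capacity your team actually tracks.

```python
from dataclasses import dataclass


@dataclass
class RoleStats:
    role: str
    qualified_applicants: int  # applicants meeting basic requirements
    target_pipeline: int       # applicants you need to make a confident hire
    unreviewed: int            # applications nobody has looked at yet
    review_capacity: int       # applications the team can review this cycle


def bottleneck(stats: RoleStats) -> str:
    """Classify a role's constraint as sourcing, screening, both, or neither."""
    needs_sourcing = stats.qualified_applicants < stats.target_pipeline
    needs_screening = stats.unreviewed > stats.review_capacity
    if needs_sourcing and needs_screening:
        return "both"
    if needs_sourcing:
        return "sourcing"
    if needs_screening:
        return "screening"
    return "neither"


# Hypothetical roles, for illustration only.
roles = [
    RoleStats("Staff ML Engineer", qualified_applicants=20, target_pipeline=100,
              unreviewed=5, review_capacity=50),
    RoleStats("Customer Support Rep", qualified_applicants=200, target_pipeline=100,
              unreviewed=180, review_capacity=50),
]

results = {r.role: bottleneck(r) for r in roles}
print(results)
```

Running this across every open role shows whether the constraint is concentrated in one category or split between them, which is the input the buying decision needs.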

Start by mapping your actual bottleneck. Then evaluate tools against that specific problem. The best AI recruitment stack is the one that addresses your constraint, not the one with the most features.